Interest Area and Context

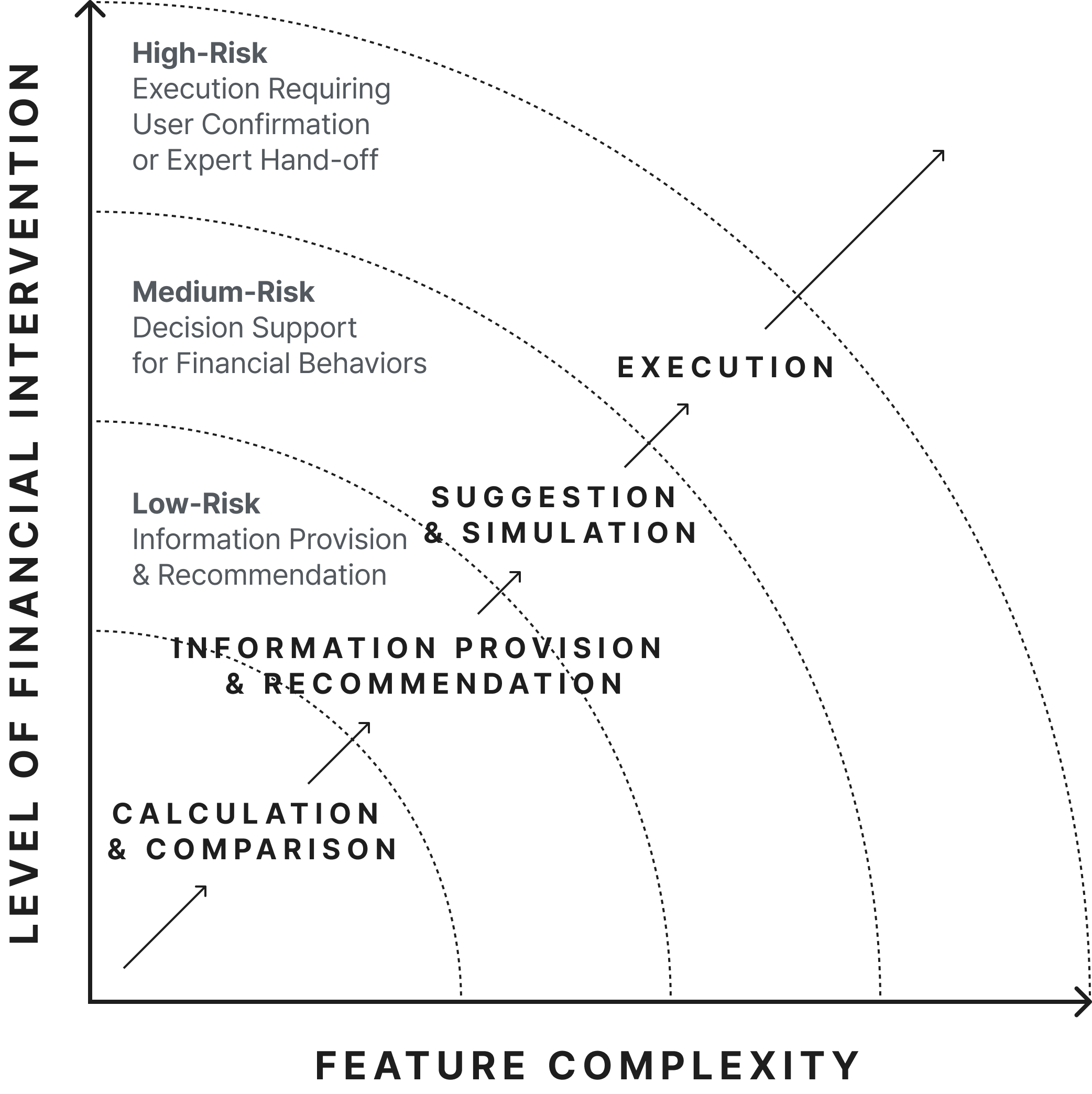

In finance, a sensitive domain, AI automation carries unique risks. Our initial exploration showed that users overwhelmingly prefer a manual invocation model: they want to request help from the AI themselves. "Automatic intervention" by the AI was quickly perceived as a "loss of control" and triggered strong user resistance.

While users were generally open to AI for simple information retrieval or narrowing down options, they demanded much stronger assurance and strict manual control for actions involving actual money transfers or long-term financial decisions.

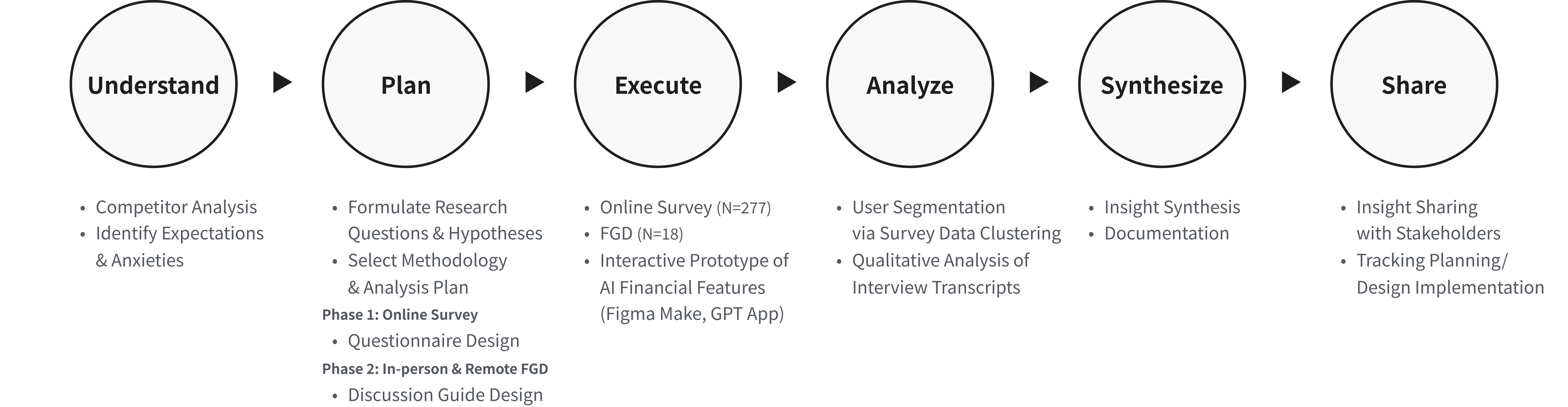

User Research & Prototyping

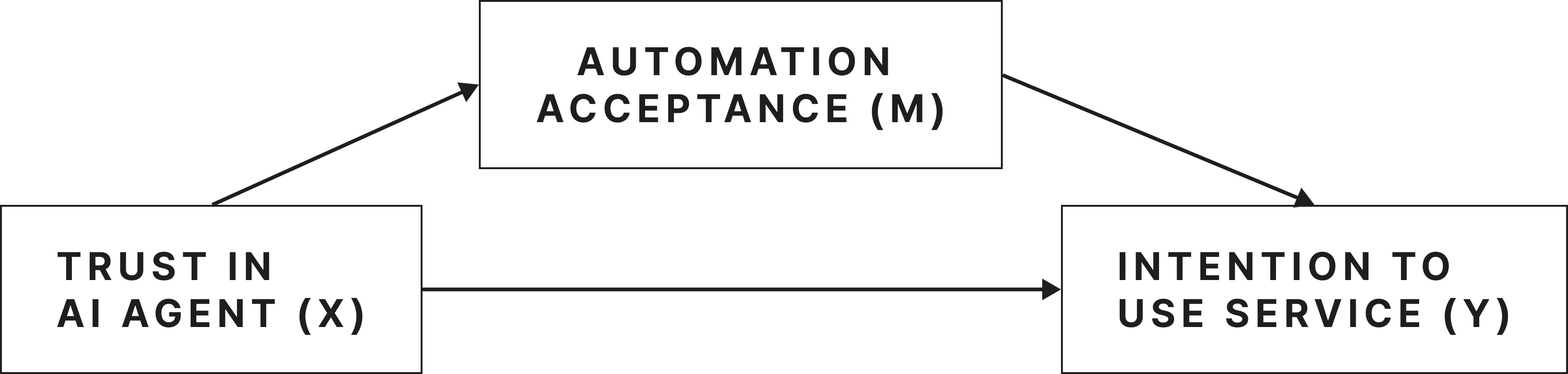

To understand these boundaries, we conducted an online survey (N=277) and focus group discussions (N=18) using interactive prototypes of AI financial features. We evaluated user responses along two axes: the level of task risk and the degree of user control.

Problem Statement

We found that for users, AI automation in finance is not just a matter of functional convenience; it is deeply tied to their sense of control and accountability. The challenge was: How do we design an AI agent that reduces the burden of complex financial tasks without making the user feel like they have lost control over their own money?

Design Value and Direction

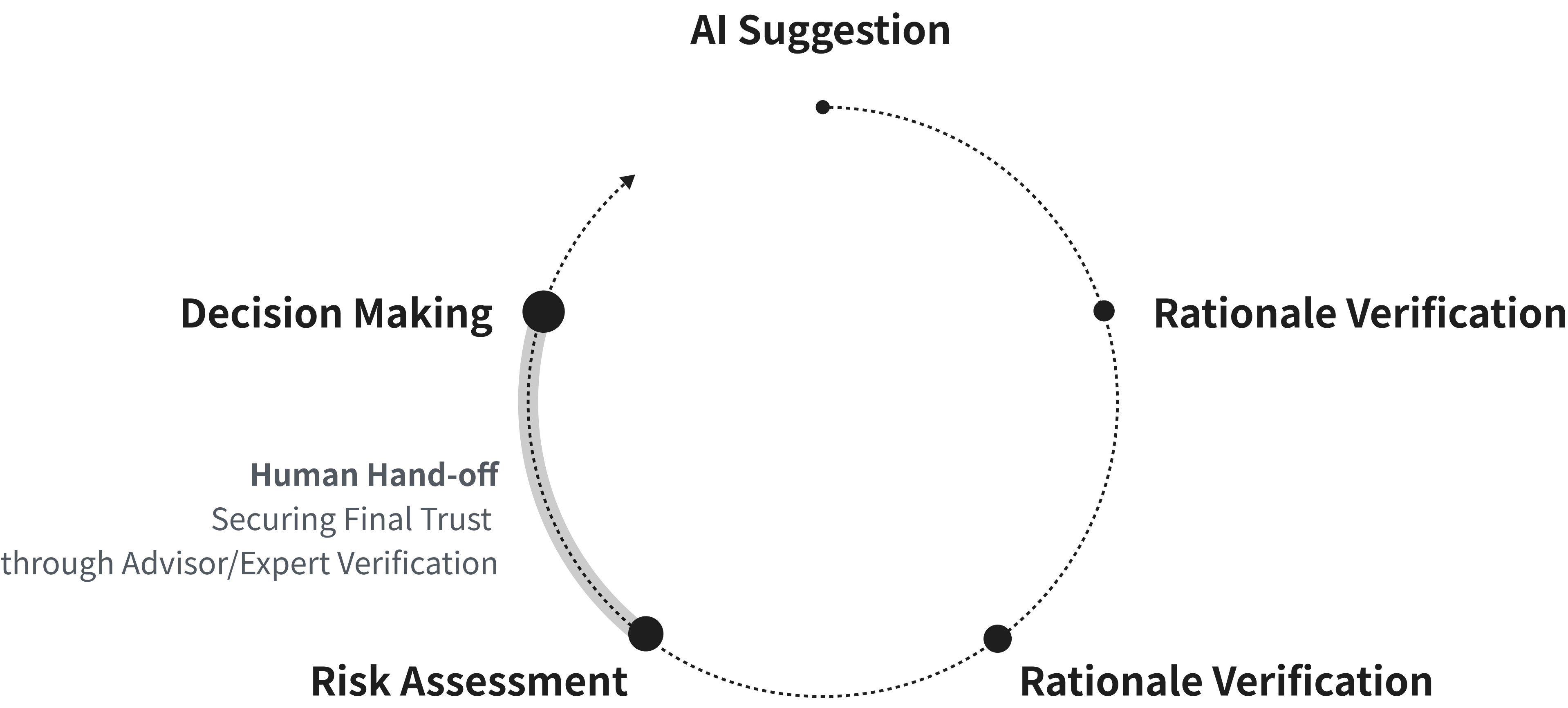

Users clearly perceived AI not as a final decision-maker but as an "assistive tool for preliminary judgment."

Our findings showed that users preferred a collaborative approach in which the AI organizes complex information first, so that they can verify the rationale before anything is executed. From this, we defined the following UX principles:

- Rationale-First Explanations: Show the data and conditions behind the AI's answer before showing the final result.

- Separation of Suggestion and Execution: Always provide a step where the user can review, modify, or hold the AI's suggestion.

- AI as a Tool, Experts as a Safety Net: When a user faces a high-risk decision, provide a seamless handoff to a human expert, who acts as the necessary "safety net."
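One way these three principles could translate into an agent's internal flow is sketched below. This is a minimal illustration rather than the project's actual implementation: the `Suggestion` class, its method names, and the `Risk` and `Status` values are all hypothetical, and the rule that a high-risk action cannot run without expert review is an assumption made here to keep the example concrete.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"    # e.g. information retrieval, narrowing down options
    HIGH = "high"  # e.g. money transfers, long-term financial decisions


class Status(Enum):
    PENDING = "pending"    # suggested, awaiting user review
    APPROVED = "approved"  # user confirmed the suggestion
    ON_HOLD = "on_hold"    # user set the suggestion aside
    EXECUTED = "executed"  # action carried out after approval


@dataclass
class Suggestion:
    """Hypothetical model of one AI suggestion moving through user review."""
    action: str
    rationale: list[str]  # data and conditions behind the suggestion
    risk: Risk
    status: Status = Status.PENDING
    expert_reviewed: bool = False

    def present(self) -> str:
        """Rationale-first: list the reasons before the proposed action."""
        lines = [f"Because: {reason}" for reason in self.rationale]
        lines.append(f"Suggested action: {self.action}")
        return "\n".join(lines)

    def approve(self) -> None:
        self.status = Status.APPROVED

    def modify(self, new_action: str) -> None:
        """A user edit sends the suggestion back to review; nothing runs."""
        self.action = new_action
        self.status = Status.PENDING
        self.expert_reviewed = False

    def hold(self) -> None:
        self.status = Status.ON_HOLD

    def hand_off_to_expert(self) -> None:
        """Stand-in for routing the case to a human expert."""
        self.expert_reviewed = True

    def execute(self) -> str:
        """Suggestion and execution are separate: run only after approval."""
        if self.status is not Status.APPROVED:
            raise PermissionError("user has not approved this suggestion")
        # Assumption: high-risk actions are blocked until an expert has reviewed.
        if self.risk is Risk.HIGH and not self.expert_reviewed:
            raise PermissionError("high-risk action requires expert review")
        self.status = Status.EXECUTED
        return f"executed: {self.action}"
```

In this sketch, calling `execute()` on a suggestion that the user has not approved raises an error, so the agent cannot act on its own proposal. A product might prefer to offer the expert handoff as an option rather than a hard gate; the stricter version is used here only because it is easier to show in a few lines.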

Strategic Roadmap

We proposed a phased integration strategy to gradually build user trust:

- Phase 1: Safe Helper — Focuses on building foundational trust by assisting with low-risk tasks and demonstrating analytical value.

- Phase 2: Smart Advisor — Introduces personalized financial advice with explicit user review and confirmation steps.

- Phase 3: Proactive Partner — Envisions the AI proactively detecting risks and offering personalized suggestions, carefully balancing the tension between "resistance to automation" and "expectations of utility."